Tier 1: AI Crawler Access

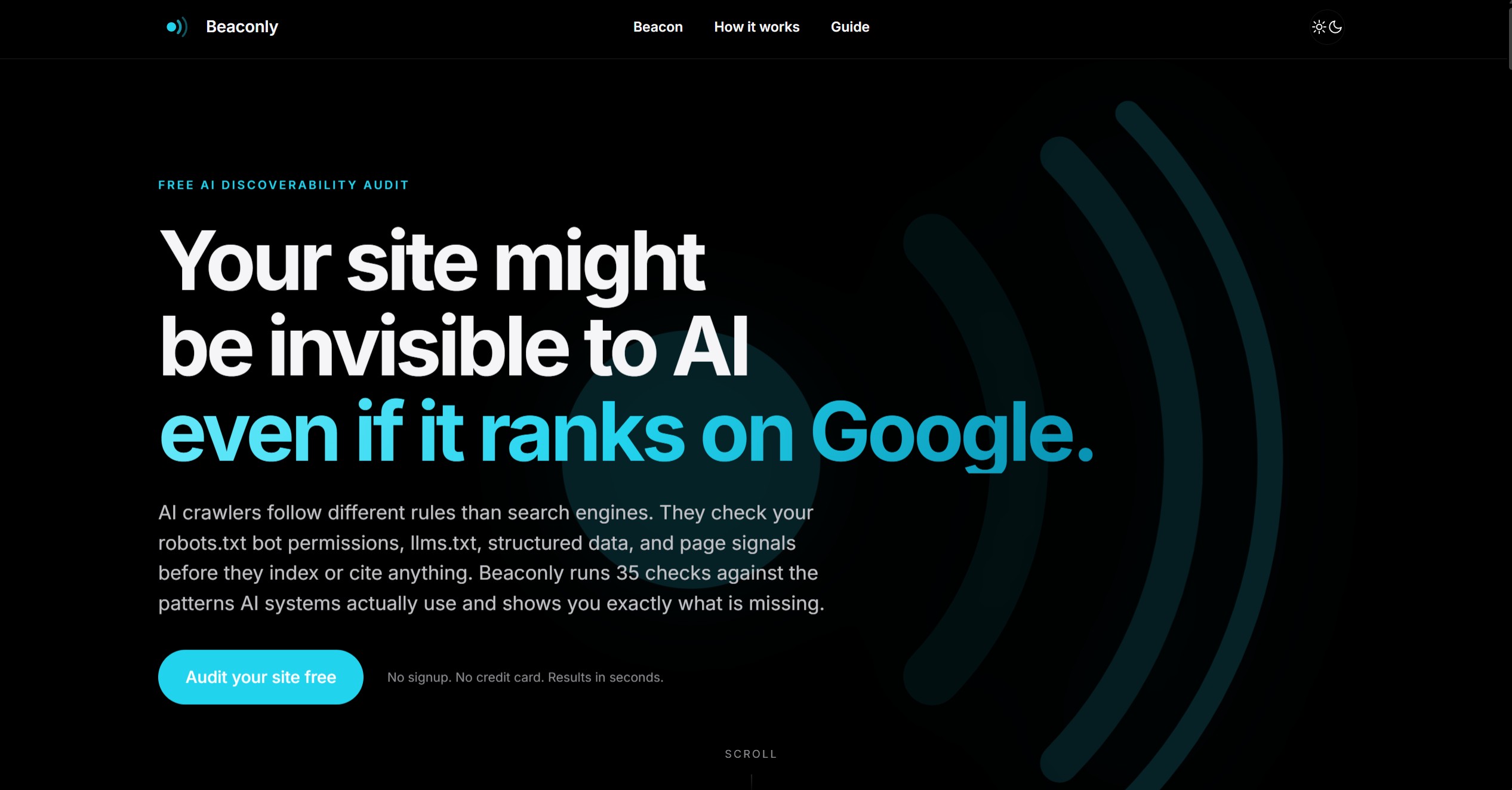

Beaconly checks your robots.txt, llms.txt, structured data, and page signals against the configuration patterns that AI crawlers expect. Enter any URL to get a full report with specific fixes.

What we check

Beaconly runs checks across the three areas AI crawlers actually look at when deciding whether to index and cite a site. Each check returns a specific pass or fail with a fix you can act on immediately.

Checks your robots.txt for explicit AI crawler permissions and validates your llms.txt structure and sitemap. Covers GPTBot, ClaudeBot, PerplexityBot, Google-Extended, and six other crawlers by name.
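As a rough illustration of the robots.txt part of this check, here is a minimal sketch. It is not Beaconly's actual implementation: the function names and status labels are hypothetical, the parser handles only `User-agent`, `Allow`, and `Disallow` lines, and only the four crawlers named above are listed.

```javascript
// Crawler names taken from the text above; the remaining six are not named there.
const AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"];

// Split robots.txt into groups of the form { agents: [...], rules: [{ type, path }] }.
function parseRobotsTxt(text) {
  const groups = [];
  let current = null;
  let lastWasAgent = false;
  for (const rawLine of text.split(/\r?\n/)) {
    const line = rawLine.replace(/#.*$/, "").trim(); // drop comments
    const sep = line.indexOf(":");
    if (sep === -1) continue;
    const field = line.slice(0, sep).trim().toLowerCase();
    const value = line.slice(sep + 1).trim();
    if (field === "user-agent") {
      // Consecutive User-agent lines share one group.
      if (!lastWasAgent) {
        current = { agents: [], rules: [] };
        groups.push(current);
      }
      current.agents.push(value.toLowerCase());
      lastWasAgent = true;
    } else if ((field === "allow" || field === "disallow") && current) {
      current.rules.push({ type: field, path: value });
      lastWasAgent = false;
    }
  }
  return groups;
}

// Report, per crawler, whether robots.txt names it and whether it is blocked at the root.
function checkAiCrawlerAccess(robotsTxt, crawlers = AI_CRAWLERS) {
  const groups = parseRobotsTxt(robotsTxt);
  return crawlers.map((name) => {
    const rules = groups
      .filter((g) => g.agents.includes(name.toLowerCase()))
      .flatMap((g) => g.rules);
    const mentioned = groups.some((g) => g.agents.includes(name.toLowerCase()));
    if (!mentioned) return { crawler: name, status: "not-mentioned" };
    const blocksRoot = rules.some((r) => r.type === "disallow" && r.path === "/");
    return { crawler: name, status: blocksRoot ? "blocked" : "allowed" };
  });
}

const sample = "User-agent: GPTBot\nAllow: /\n\nUser-agent: ClaudeBot\nDisallow: /\n";
console.log(checkAiCrawlerAccess(sample));
```

A crawler reported as "not-mentioned" falls back to whatever the wildcard group says, which this sketch deliberately does not evaluate, since the check described above is about explicit permissions.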

Inspects your JSON-LD for the entity signals, freshness markers, and content types AI systems use when deciding what to cite. Checks Organization identity, sameAs links, dateModified, FAQPage schema, and more.
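To make the structured data check concrete, here is a small sketch of how JSON-LD might be pulled from a page and tested for the signals listed above. The function names and the shape of the result are assumptions for illustration, and the regex-based extraction is a shortcut rather than a full HTML parser.

```javascript
// Collect every JSON-LD node embedded in the page's <script> tags.
function extractJsonLd(html) {
  const pattern = /<script[^>]*type=["']application\/ld\+json["'][^>]*>([\s\S]*?)<\/script>/gi;
  const nodes = [];
  for (const match of html.matchAll(pattern)) {
    try {
      const data = JSON.parse(match[1]);
      // A block may hold one object, an array, or an @graph wrapper.
      const items = Array.isArray(data) ? data : data["@graph"] || [data];
      nodes.push(...items);
    } catch {
      // Invalid JSON-LD is skipped here; a real check would likely report it.
    }
  }
  return nodes;
}

// @type may be a single string or an array of strings.
function hasType(node, type) {
  const t = node && node["@type"];
  return Array.isArray(t) ? t.includes(type) : t === type;
}

// Hypothetical result shape: one boolean per signal named in the text.
function checkStructuredData(html) {
  const nodes = extractJsonLd(html);
  const org = nodes.find((n) => hasType(n, "Organization"));
  return {
    organization: Boolean(org),
    sameAs: Boolean(org && [].concat(org.sameAs || []).length > 0),
    dateModified: nodes.some((n) => n && typeof n.dateModified === "string"),
    faqPage: nodes.some((n) => hasType(n, "FAQPage")),
  };
}
```

Each `false` in the result would map to one failed check with its own fix, such as adding `sameAs` links to the Organization node.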

Reviews your meta tags, heading structure, Open Graph data, canonical URL, HTTPS, and response speed. These are the page-level signals AI crawlers read before anything else.
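A page-signal check along these lines could look like the sketch below. The function name, the regex patterns, and the "exactly one h1" rule for heading structure are assumptions made for the example, not Beaconly's documented rules. Response speed is left out because it needs a timed network request.

```javascript
// Inspect a page's URL and raw HTML for a few page-level signals.
function checkPageSignals(url, html) {
  const has = (pattern) => pattern.test(html);
  const h1Count = (html.match(/<h1[\s>]/gi) || []).length;
  return {
    https: new URL(url).protocol === "https:",
    metaDescription: has(/<meta[^>]+name=["']description["'][^>]*>/i),
    canonical: has(/<link[^>]+rel=["']canonical["'][^>]*>/i),
    openGraphTitle: has(/<meta[^>]+property=["']og:title["'][^>]*>/i),
    singleH1: h1Count === 1, // assumed heading rule: exactly one top-level heading
  };
}
```

The patterns expect the identifying attribute (`name`, `rel`, `property`) to be quoted, which covers typical markup but not every valid HTML variant.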

Every failed check returns an explanation of why it matters and an exact fix you can implement. No letter grades or vague scores, just what is wrong and how to fix it.

Built with

Beaconly uses a Cloudflare Worker to fetch and analyze each target domain server-side, with all checks running in parallel. The frontend is vanilla HTML, CSS, and JavaScript with no build step and no external dependencies.
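Running checks in parallel can be sketched with `Promise.allSettled`, which lets every check finish even if one throws. This is an illustrative pattern only: `runChecks` and the check names in the usage example are hypothetical, and in a Worker a function like this would be called from the request handler.

```javascript
// Run every check concurrently; one failing check must not sink the others.
async function runChecks(target, checks) {
  const entries = Object.entries(checks);
  const settled = await Promise.allSettled(
    // Promise.resolve().then(...) also captures checks that throw synchronously.
    entries.map(([, check]) => Promise.resolve().then(() => check(target)))
  );
  return settled.map((outcome, i) => ({
    check: entries[i][0],
    pass: outcome.status === "fulfilled" && outcome.value === true,
    error: outcome.status === "rejected" ? String(outcome.reason) : null,
  }));
}

// Usage with placeholder checks (each receives the target URL).
runChecks("https://example.com", {
  usesHttps: async (url) => new URL(url).protocol === "https:",
  hasPath: async (url) => new URL(url).pathname.length > 1,
}).then((results) => console.log(results));
```

Because the checks start together, total time is bounded by the slowest check rather than the sum of all of them.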

Built by Orygn

Orygn builds custom software, security tooling, and infrastructure-level systems. Beaconly came out of the same work we do for clients, applied to the problem of making sites discoverable to the AI systems people are increasingly using to find information.
